76

Unique participants tested across two model generations

11+

Distinct cultural and ethnic backgrounds represented

1.5M+

PPG data points collected under ground-truth conditions

0.3%

Weight-scale accuracy for ground-truth hydration change

01

Every vital sign has a sensor. Hydration doesn't.

Heart rate has the ECG and the optical pulse sensor. Blood oxygen has the pulse oximeter. Glucose has the CGM. Temperature has the infrared thermometer. Hydration, arguably the most volatile variable in human physiology, has urine color charts, thirst perception, and the occasional blood draw. None of them is accurate, seamless, and reliable across populations.

Aqoir is building the missing sensor. The approach is photoplethysmography — the same optical technique behind pulse oximetry — combined with machine learning on waveform features that change with tissue hydration. This approach is well-grounded in peer-reviewed research (Kimball 2022, Reljin 2018), but no one has yet shipped it as an accurate, seamless consumer-grade measurement. That's the category we're building.

02

A dataset that reflects the world the device will live in.

Measurement categories are built on diverse data, not convenient data. Our 76-participant cohort spans 11+ cultural and ethnic backgrounds, ages 12 to 81, varied fitness levels, and real-world daily routines. This diversity is deliberate — a hydration sensor that only works for one demographic isn't a sensor, it's a demo.

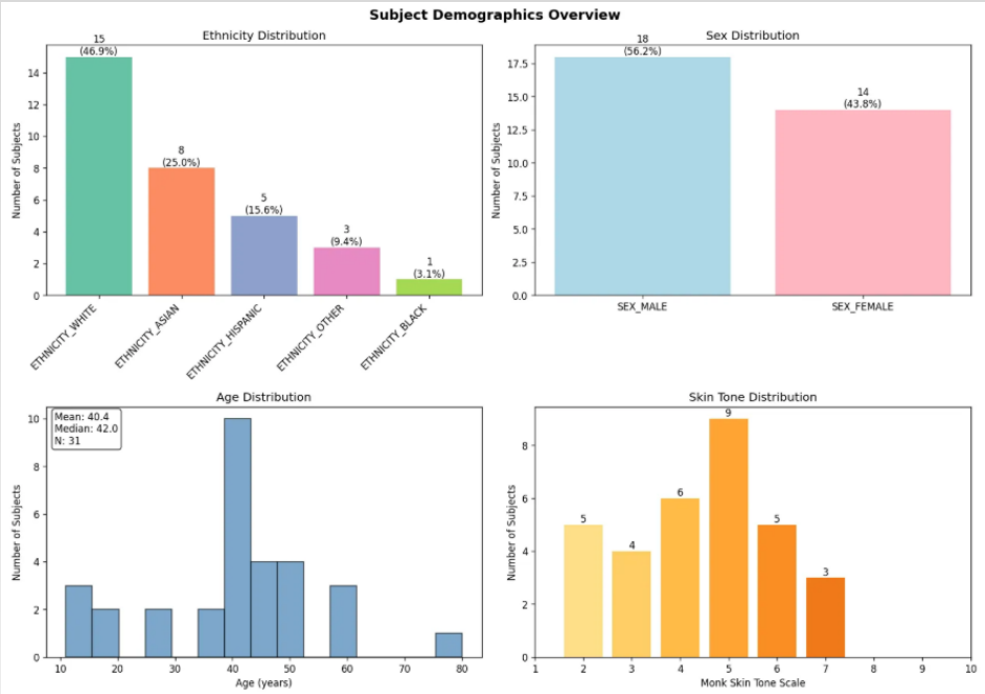

The Cohort

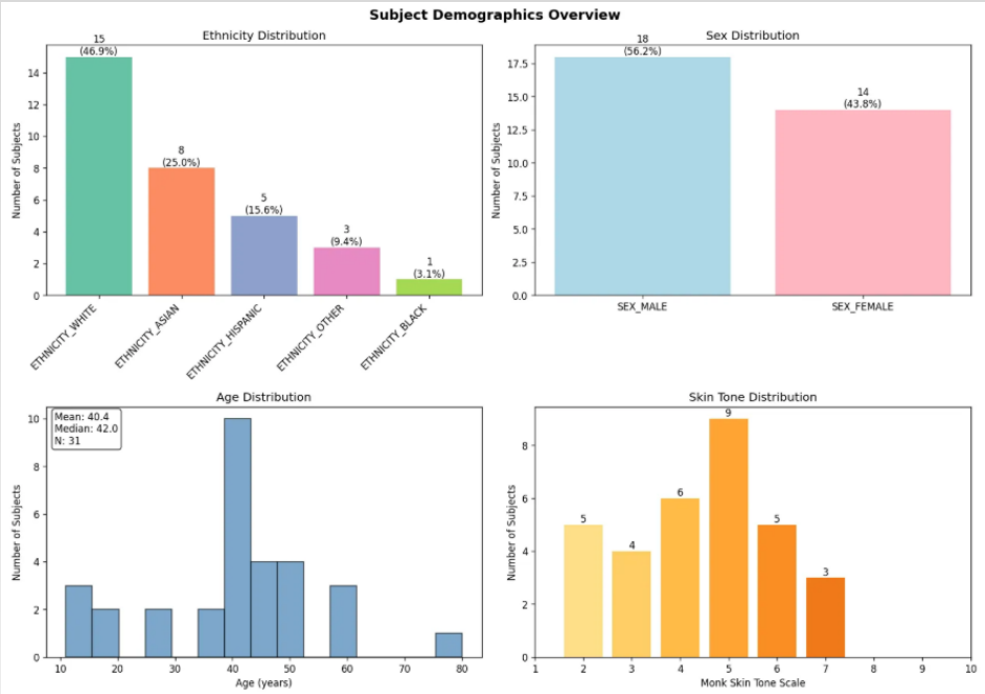

Subject demographics — ethnicity, age, sex, and Monk skin-tone distribution across the 76-participant study.

Subject demographics across the cohort that trained and validated both model generations: diverse ethnicity, an age span of 12–81, and a full spread across the Monk skin-tone scale, which is critical for PPG validity across skin pigmentation.

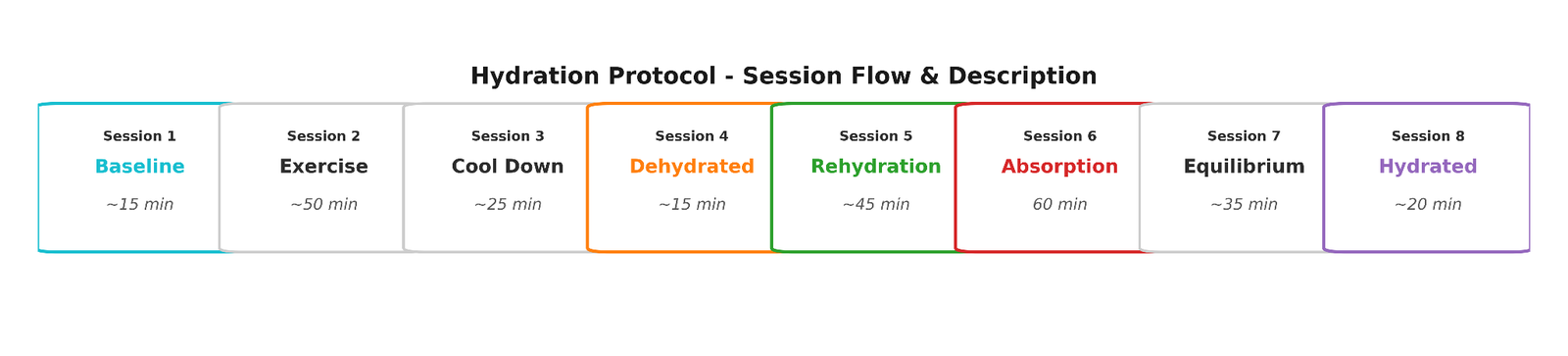

The Protocol

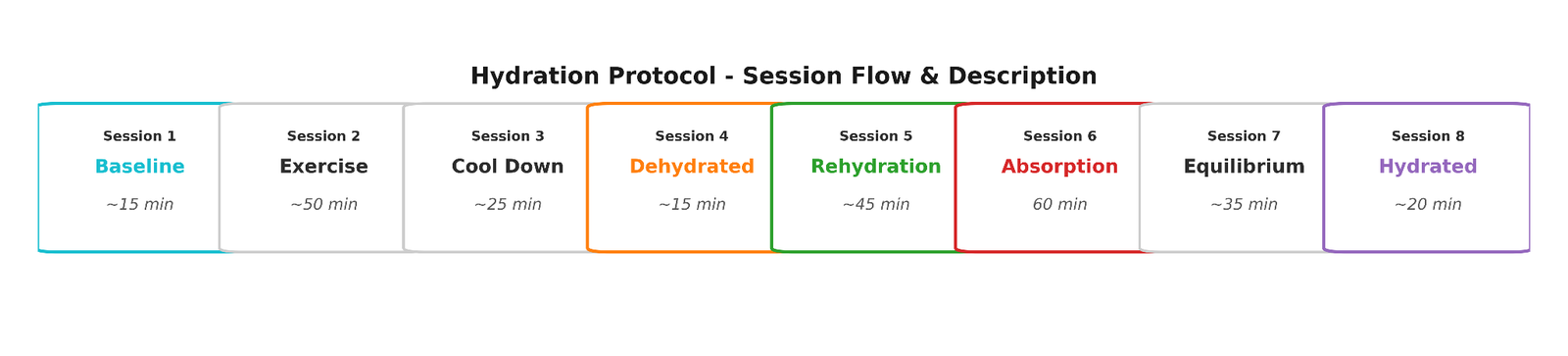

An 8-session hydration protocol that produces ground-truth dehydration and rehydration curves for every participant.

Every participant runs through a structured exercise → dehydration → rehydration protocol. Ground-truth hydration change is measured with a weight scale (0.3% accuracy) and urine specific gravity. This gives us precise labels at every time point, the foundation on which both model generations were trained.
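As a rough illustration of how weight-scale readings can become hydration labels, the sketch below computes percent body-mass change relative to a baseline weigh-in. The formula, the function name `body_mass_change_percent`, and the checkpoint weights are assumptions for illustration only; they are not taken from the study protocol.

```python
def body_mass_change_percent(baseline_kg: float, current_kg: float) -> float:
    """Percent change in body mass relative to baseline (negative means fluid loss)."""
    if baseline_kg <= 0:
        raise ValueError("baseline_kg must be positive")
    return 100.0 * (current_kg - baseline_kg) / baseline_kg


# Hypothetical session: scale readings in kg at each protocol checkpoint.
checkpoints = [
    ("baseline", 70.00),
    ("post-exercise", 68.95),
    ("post-rehydration", 69.80),
]

baseline = checkpoints[0][1]
# One label per checkpoint, rounded to two decimals for readability.
labels = [
    (name, round(body_mass_change_percent(baseline, kg), 2))
    for name, kg in checkpoints
]

for name, change in labels:
    print(f"{name}: {change:+.2f}% body mass")
```

In this toy session the post-exercise reading sits below baseline and the post-rehydration reading recovers most of the loss, which is the curve shape the protocol is designed to produce.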

03

v1 Yampa — a working algorithm on consumer-grade hardware.

Our first-generation model ("Yampa") proved something significant: engineered PPG waveform features, processed through a machine-learning pipeline running on non-specialized hardware, can track hydration state across a demographically diverse cohort. 95% of the 60 analyzed subjects showed positive correlation between Yampa's predictions and weight-scale ground truth — strong peer-review-grade validation of the core measurement hypothesis, and the foundation Rio Grande builds on.

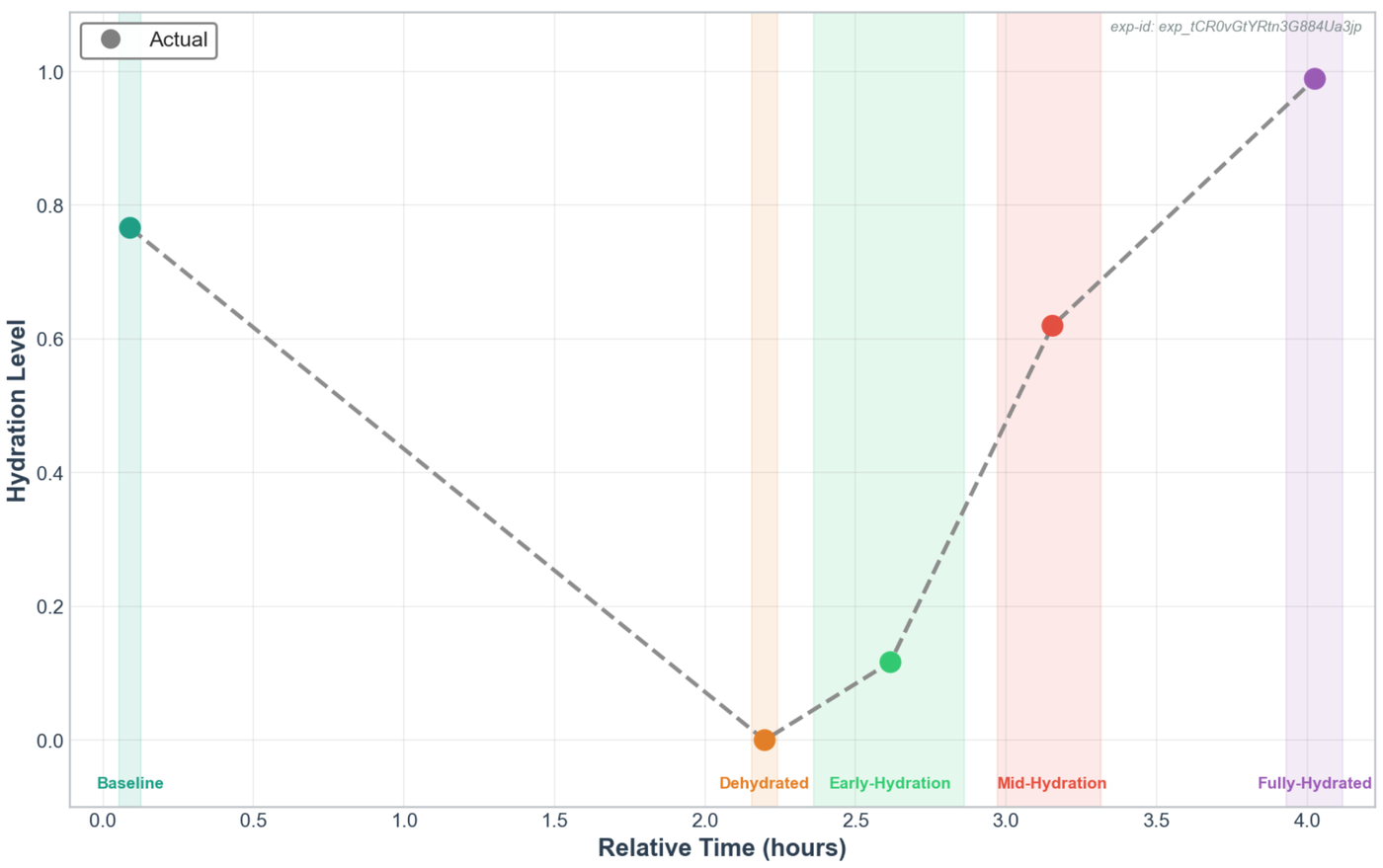

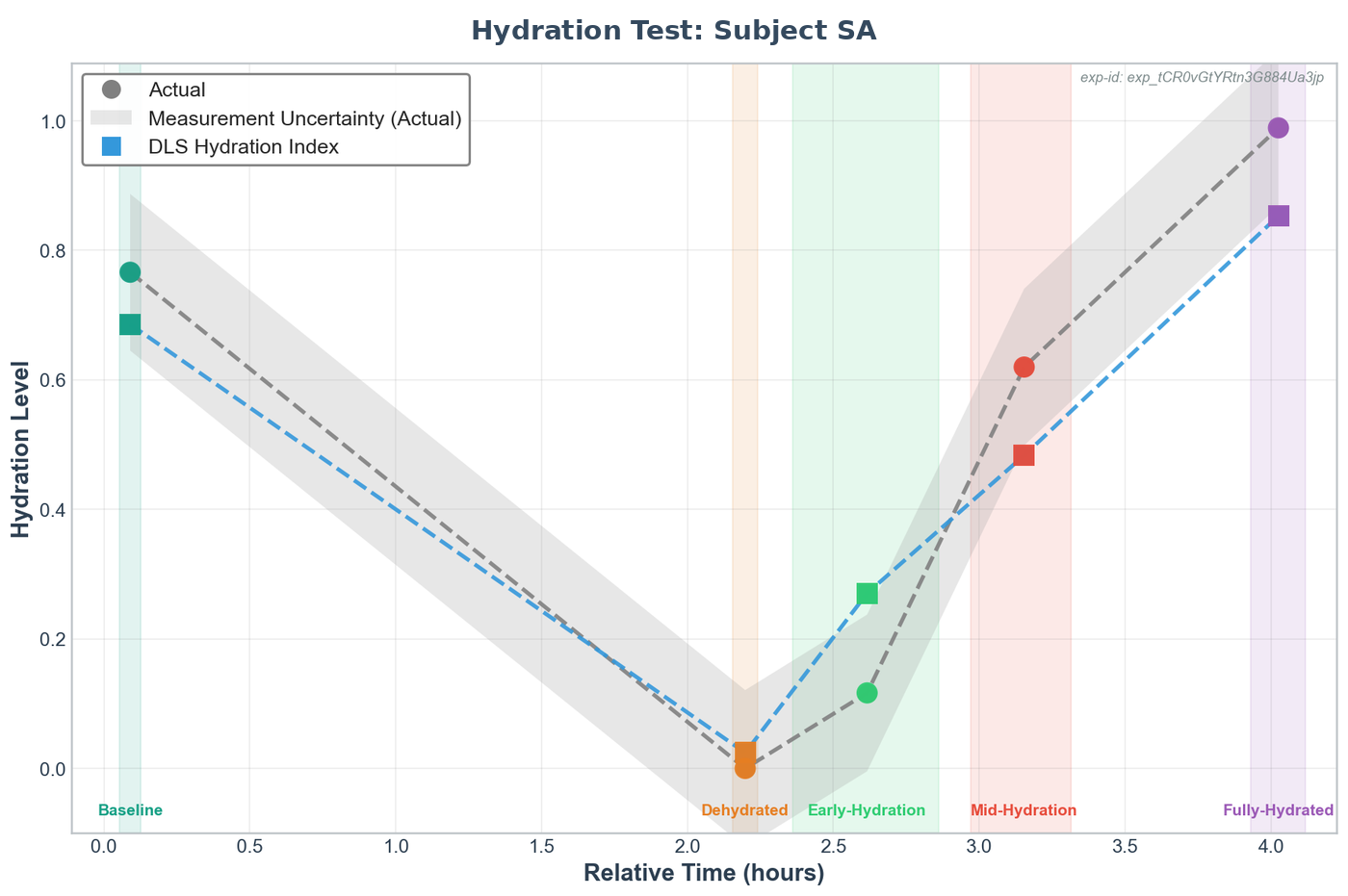

Yampa · Single-Subject Walkthrough

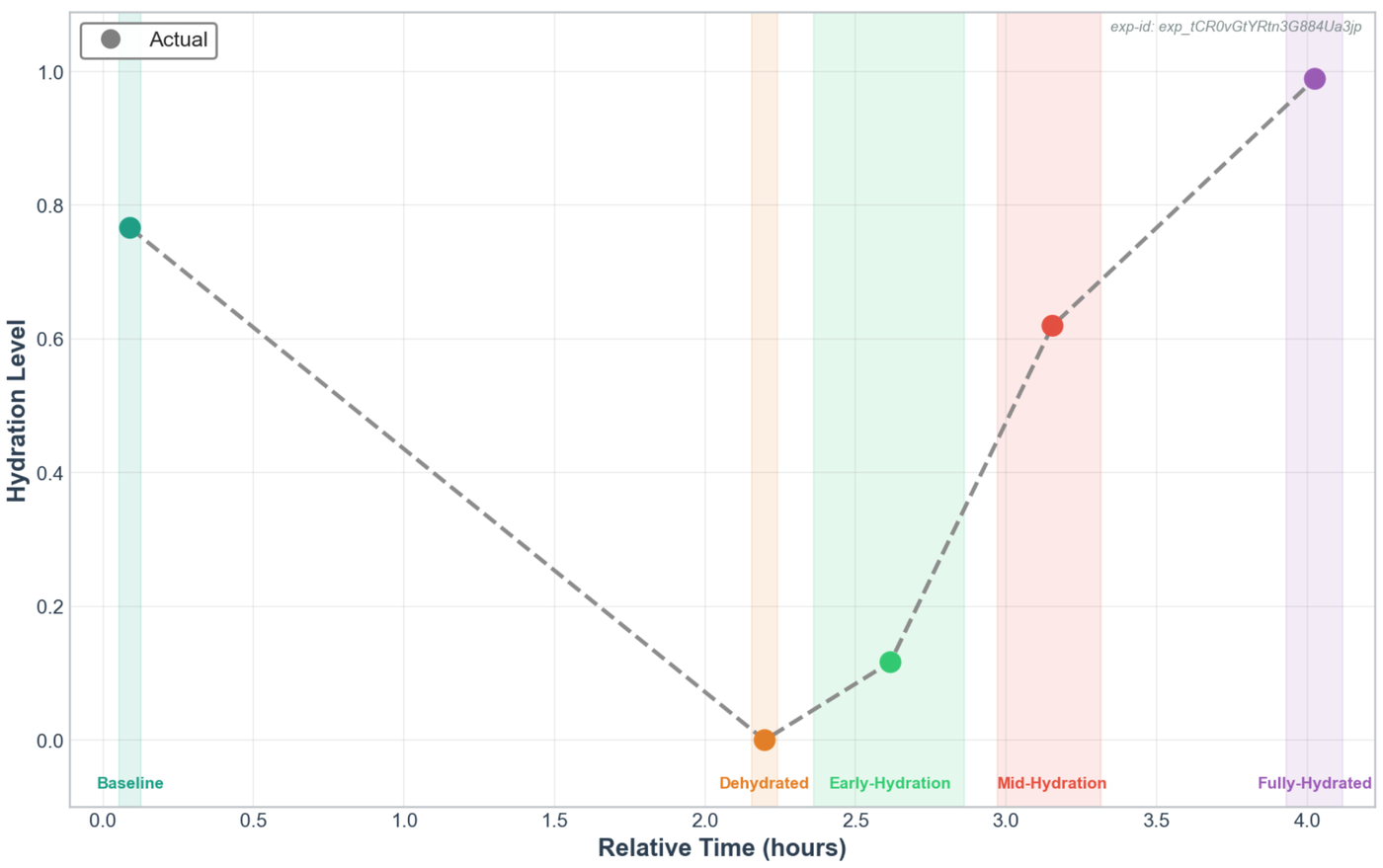

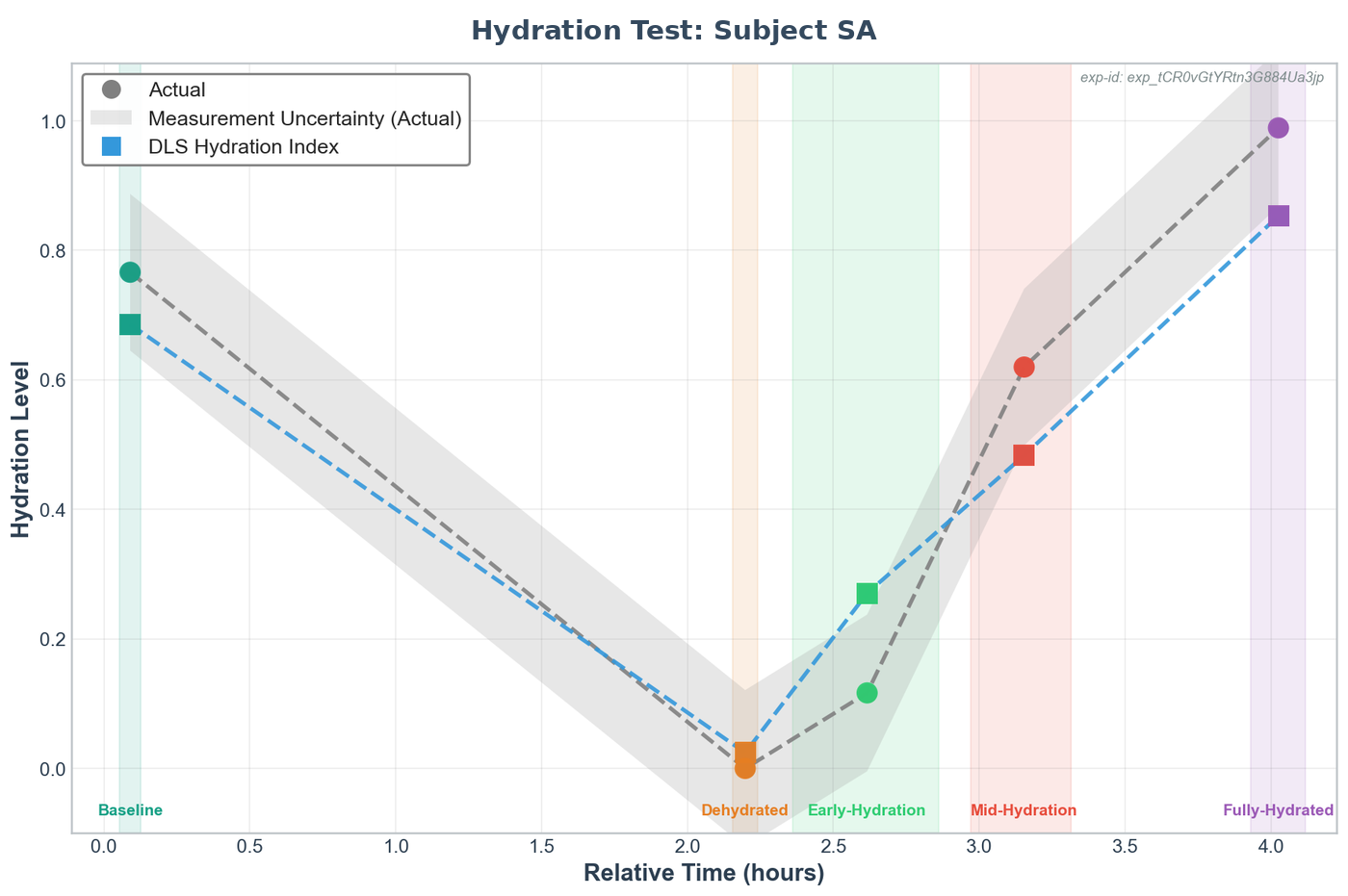

Yampa tracks the dehydration and rehydration curve for an individual participant — here, against weight-scale ground truth with uncertainty bands.

One representative participant's hydration test. The gray line is ground truth from the weight scale; the blue line is Yampa's prediction from PPG waveform features alone. The prediction tracks the full curve — baseline, exercise-induced dehydration, rehydration, and return to full hydration — on consumer-grade optical hardware, not lab equipment.

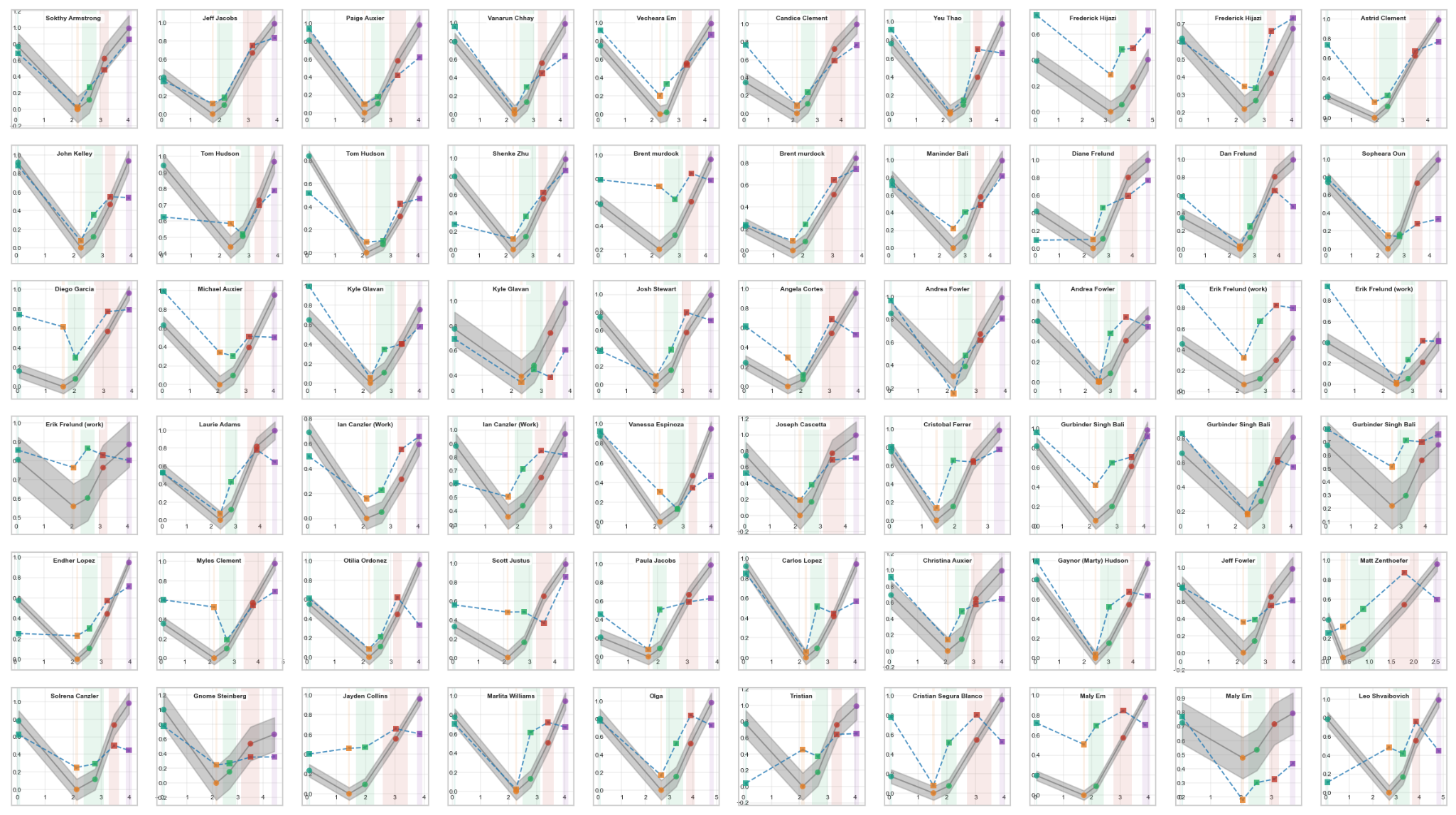

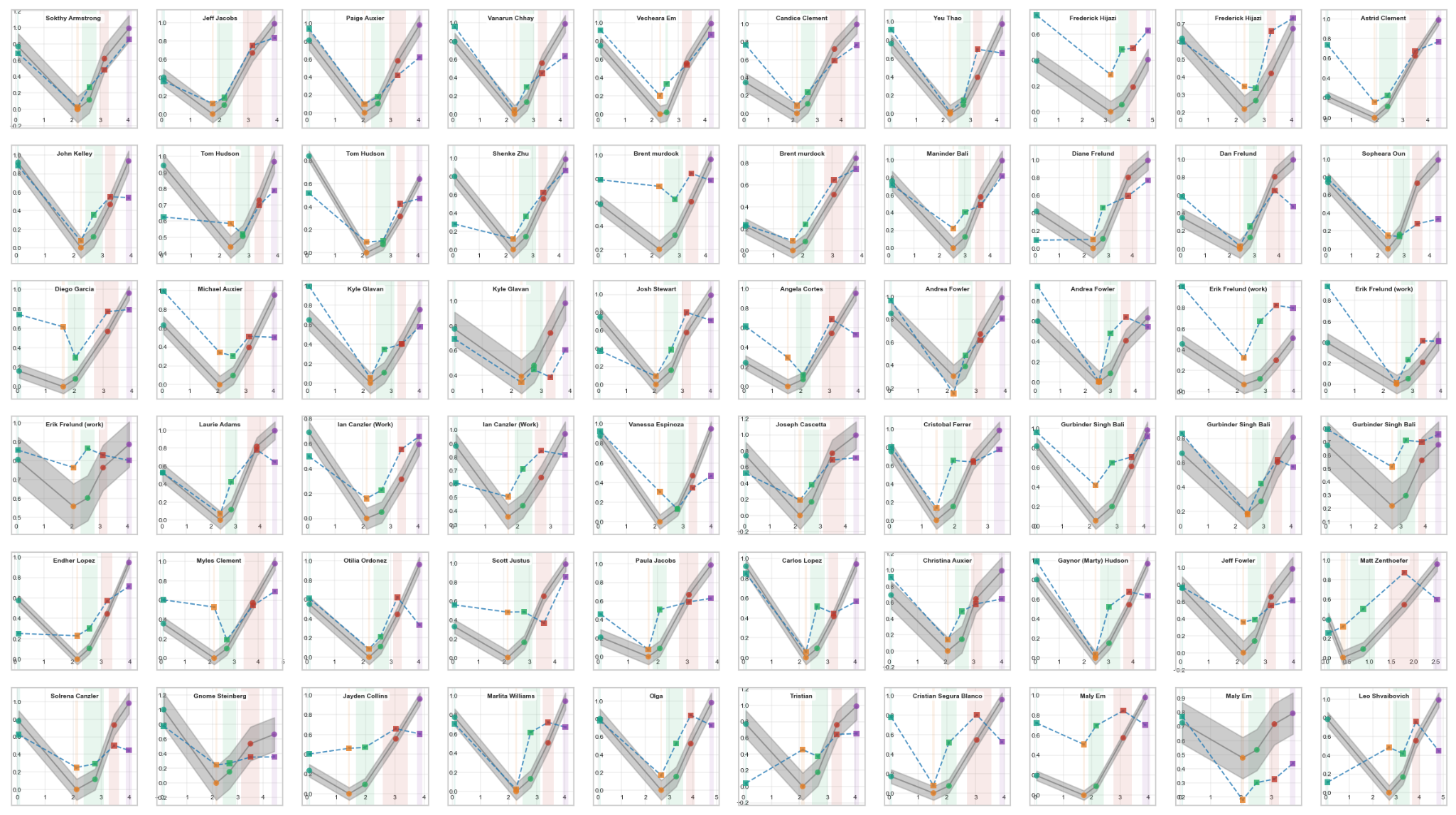

Yampa · Full Cohort

Every participant's prediction vs. ground truth — 60 subjects, validated via leave-one-subject-out cross-validation.

The full Yampa validation — every analyzed subject, each chart showing that participant's ground-truth curve (solid) against Yampa's prediction (dashed). Cross-validation excluded each subject from training before testing, ensuring the model had never seen their physiology before.
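The leave-one-subject-out split described above can be sketched as follows. This is a minimal, standard-library illustration of the splitting logic only; the function name `leave_one_subject_out` and the subject IDs are hypothetical, and the actual training pipeline is not shown in the source.

```python
from collections import defaultdict
from typing import Dict, Iterator, List, Sequence, Tuple


def leave_one_subject_out(
    subject_ids: Sequence[str],
) -> Iterator[Tuple[str, List[int], List[int]]]:
    """Yield (held_out_subject, train_indices, test_indices), one fold per subject."""
    by_subject: Dict[str, List[int]] = defaultdict(list)
    for idx, sid in enumerate(subject_ids):
        by_subject[sid].append(idx)

    for held_out, test_idx in by_subject.items():
        # Every recording from the held-out subject is excluded from training.
        train_idx = [i for i, sid in enumerate(subject_ids) if sid != held_out]
        yield held_out, train_idx, test_idx


# Hypothetical mapping: one entry per recording, naming the subject it came from.
recording_subjects = ["s01", "s01", "s02", "s03", "s03", "s03"]
folds = list(leave_one_subject_out(recording_subjects))

for held_out, train_idx, test_idx in folds:
    print(held_out, "train:", train_idx, "test:", test_idx)
```

Splitting by subject rather than by recording is what keeps a participant's own physiology out of the model that is later tested on them.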

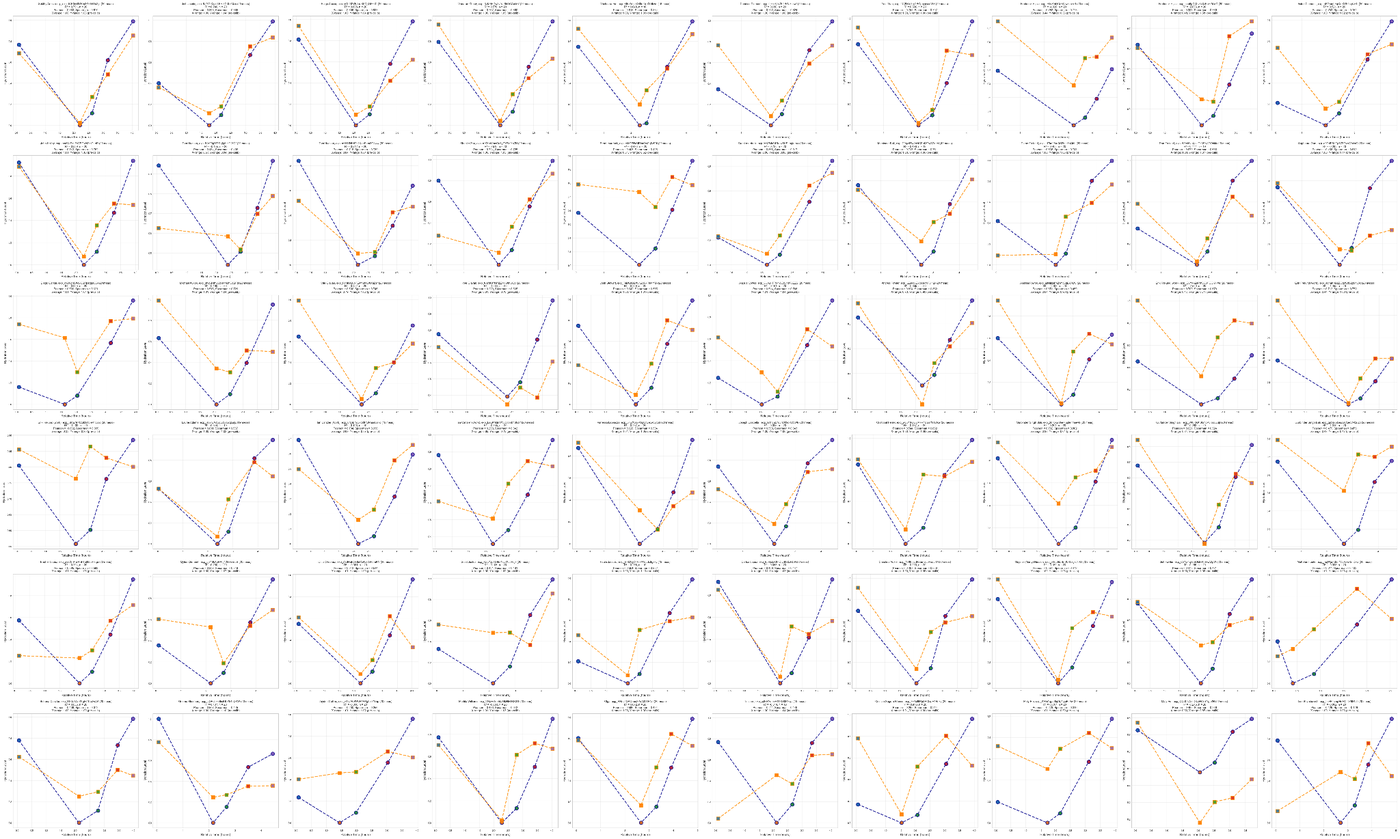

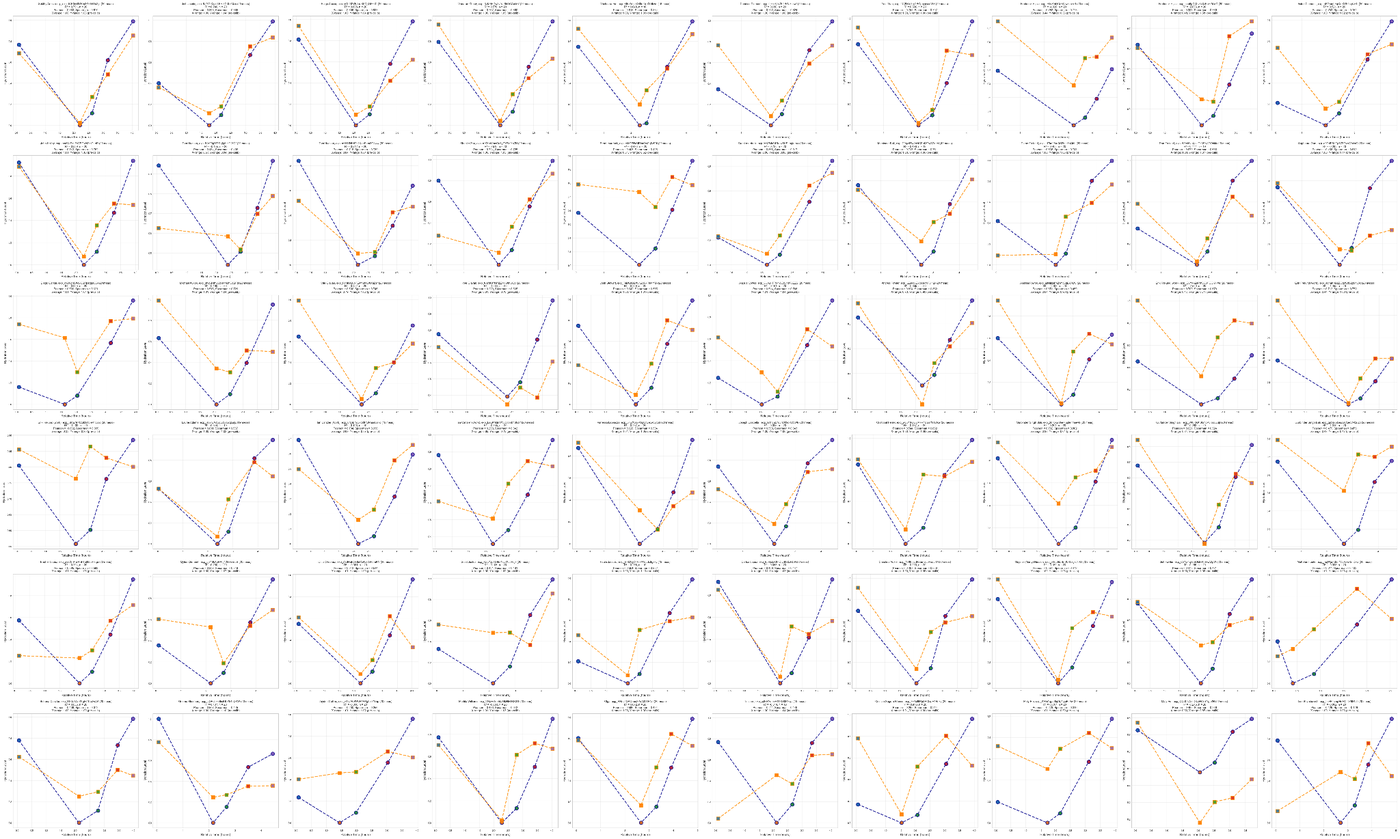

Yampa · Correlation Distribution

Trend correlation (Pearson r) between Yampa's prediction and ground truth across all 60 analyzed subjects.

Ranked per-subject correlations. 95% of analyzed subjects (57 of 60) show positive correlation; the majority correlate above 0.7; 25 subjects correlate above 0.9. The three outliers at the bottom were traced to protocol errors and app data-capture issues — not algorithmic failures, but they pointed to where v2 would focus.
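A per-subject correlation summary of this kind can be sketched as below. The Pearson formula is standard; the subject names, the toy prediction series, and the `summarize` helper are hypothetical and stand in for the real per-subject results.

```python
import math
from typing import Dict, Sequence


def pearson_r(x: Sequence[float], y: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length series."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("series must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant series")
    return cov / math.sqrt(var_x * var_y)


def summarize(per_subject_r: Dict[str, float], strong: float = 0.7) -> Dict[str, int]:
    """Count subjects with positive and with strong (> `strong`) correlation."""
    values = list(per_subject_r.values())
    return {
        "n": len(values),
        "positive": sum(r > 0 for r in values),
        "strong": sum(r > strong for r in values),
    }


# Hypothetical ground truth (% body-mass change) and two toy prediction series.
truth = [0.0, -0.5, -1.2, -1.5, -0.8, -0.2]
predictions = {
    "tracks": [0.1, -0.4, -1.0, -1.6, -0.9, -0.1],
    "inverted": [-t for t in truth],
}

per_subject_r = {sid: pearson_r(pred, truth) for sid, pred in predictions.items()}
summary = summarize(per_subject_r)
print(per_subject_r, summary)
```

Because Pearson r measures trend agreement rather than absolute error, a subject can score highly even if the prediction is offset from ground truth, which is why it is reported here as a trend correlation.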

Model v1 · Codename: Yampa

What Yampa established

Yampa is the proof point that made the category-creation argument credible. Before Yampa, the question was whether PPG waveforms on a wrist-worn device could track hydration at all. After Yampa, the question became how much better we could make it. The model aligns with the ~88% accuracy result Reljin et al. reported on PPG-based volume status (Reljin 2018) and with the broader Compensatory Reserve framework from the US Army and Georgia Tech (Kimball 2022), giving Yampa external scientific grounding, not just internal validation.

Yampa also surfaced exactly where the next generation would focus: demographic consistency (variance in accuracy across age and ethnicity), temporal stability (some predictions drifted more than the underlying hydration state changed), and harder-PPG robustness (subtle fiducial points on some waveforms weren't being located reliably). Rio Grande addresses all three.

04

v2 Rio Grande — the category leap.

Our second-generation model ("Rio Grande") is where the measurement becomes category-worthy. Three things had to be true: the model had to work across every population, predictions had to be reproducible over time, and the signal-processing foundation had to be defensible to technical scrutiny. Rio Grande delivers on all three.

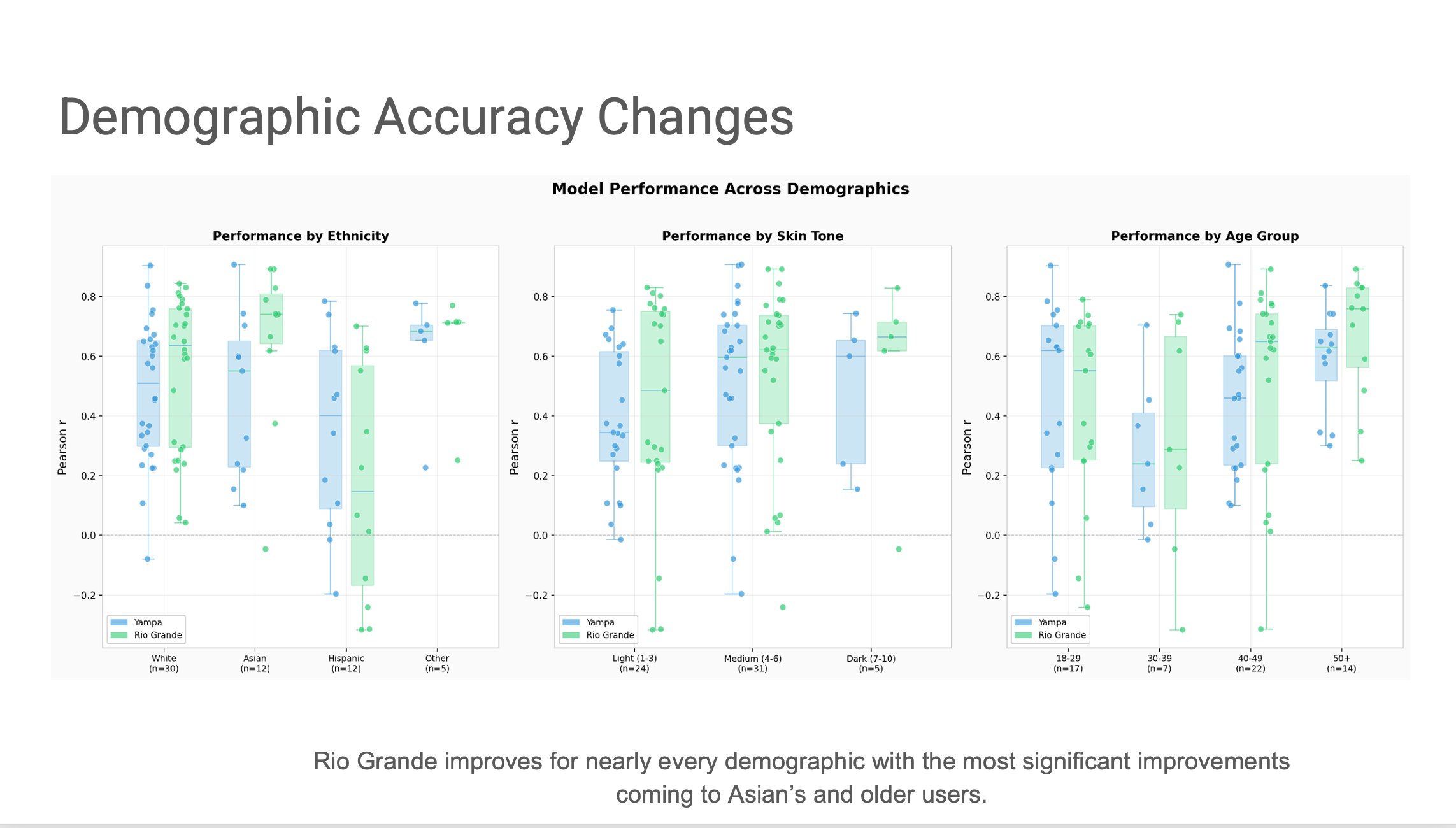

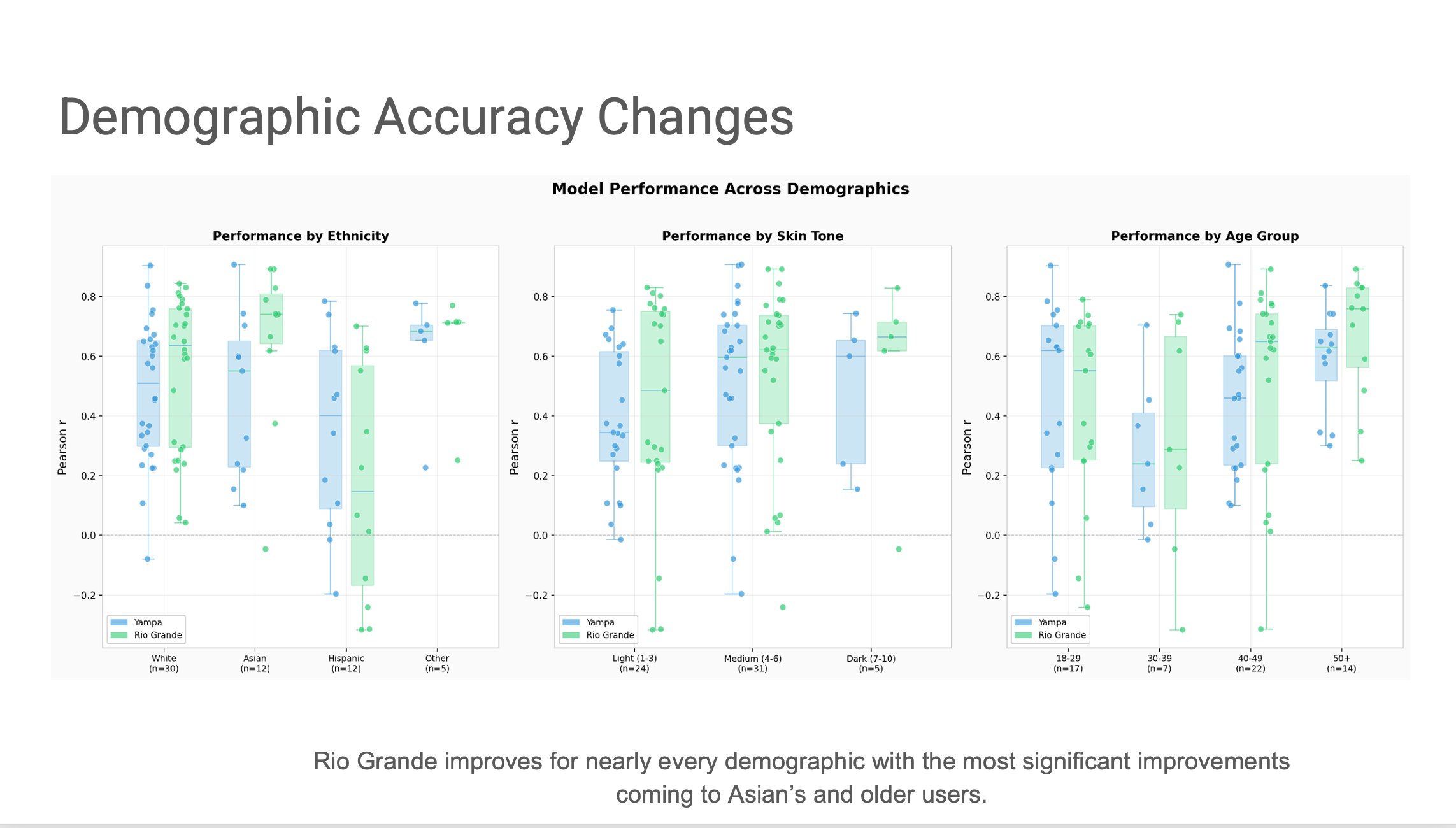

Evidence 1 · Works across populations

Rio Grande improves accuracy for nearly every demographic — with the biggest gains in Asian users and older users, exactly where Yampa had more variance.

Pearson r correlation between model prediction and weight-scale ground truth, across three demographic axes (ethnicity n=59, skin tone n=60, age group n=60). Yampa is blue; Rio Grande is green. A measurement category has to work across every population, not just the easy ones.

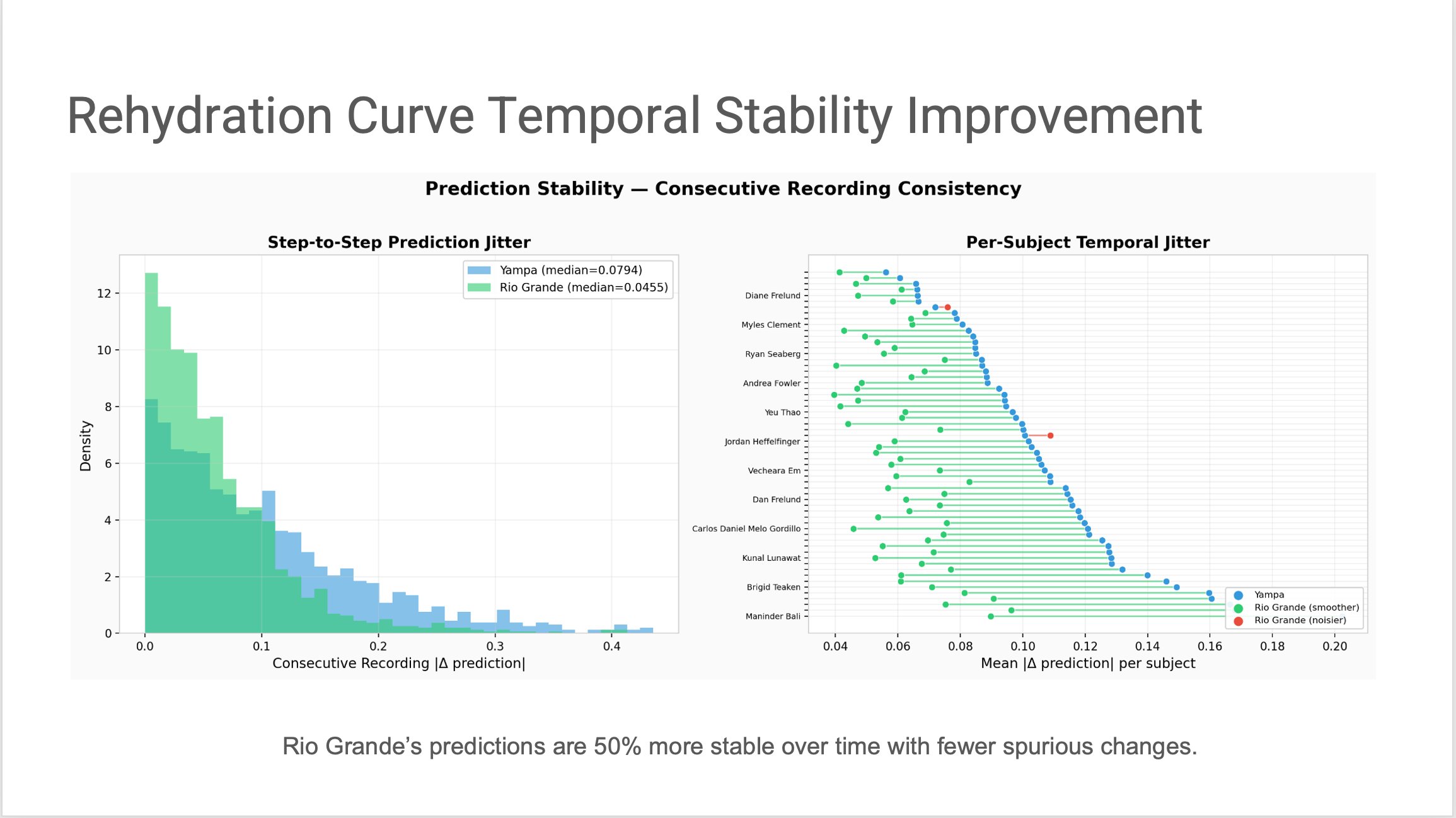

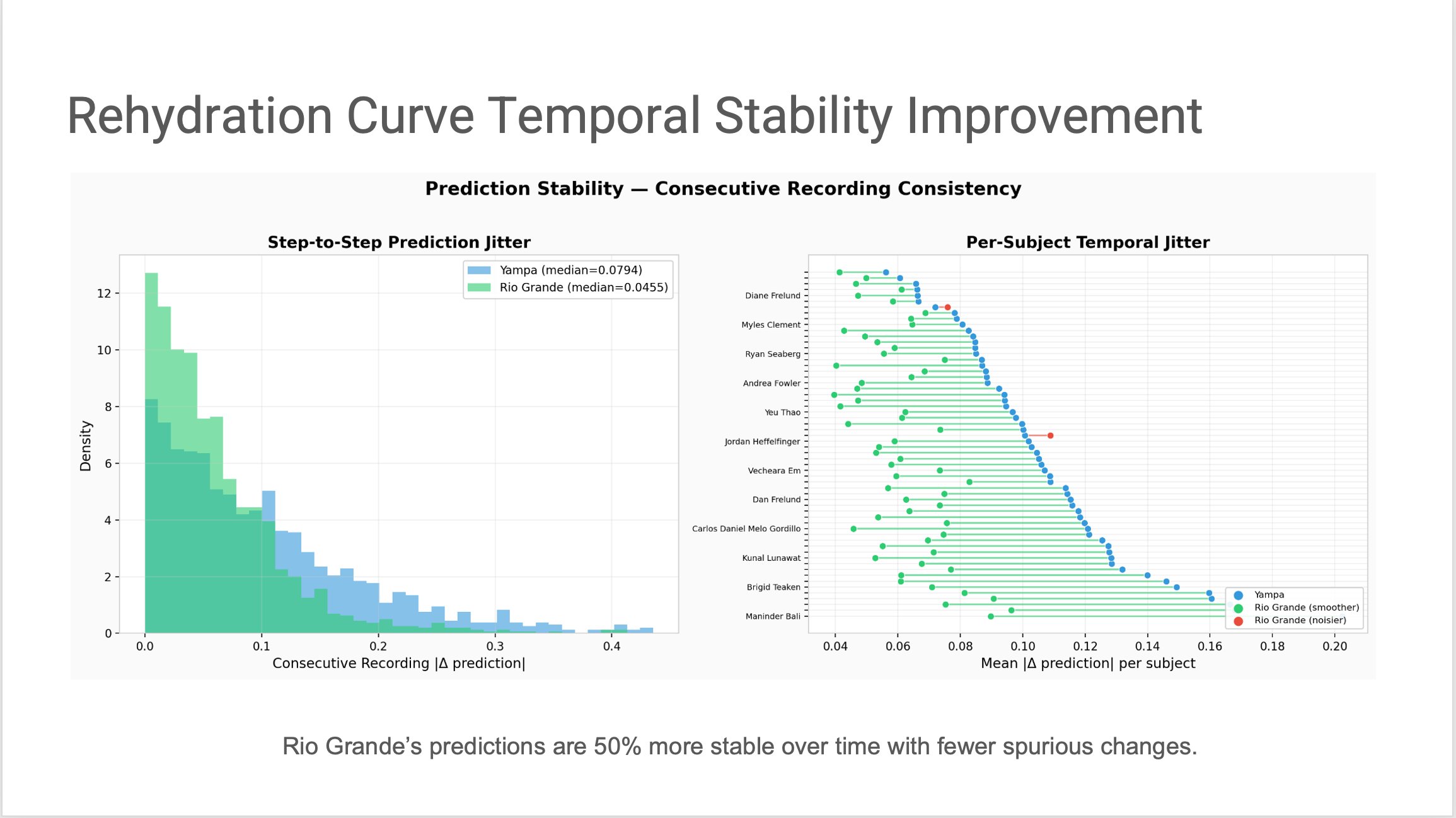

Evidence 2 · Reproducible over time

Rio Grande's predictions are markedly more stable between consecutive recordings: median step-to-step jitter dropped from 0.0794 to 0.0455, a reduction of about 43%.

Step-to-step prediction jitter (left) and per-subject temporal jitter (right). Lower is better. A measurement category has to be reproducible, not just correlated — a sensor that reads 72% hydration now and 68% thirty seconds later, with no physiological reason, isn't yet a sensor.
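To make the jitter metric concrete, here is a minimal sketch that treats step-to-step jitter as the median absolute change between consecutive predictions. That definition is an assumption (the source does not spell out the formula), and the two prediction series are invented. The last lines compute the relative reduction implied by the two medians reported above.

```python
from statistics import median
from typing import Sequence


def step_jitter(predictions: Sequence[float]) -> float:
    """Median absolute change between consecutive predictions (assumed definition)."""
    if len(predictions) < 2:
        raise ValueError("need at least two predictions")
    return median(abs(b - a) for a, b in zip(predictions, predictions[1:]))


def relative_reduction(before: float, after: float) -> float:
    """Fractional drop from `before` to `after`."""
    return (before - after) / before


# Hypothetical consecutive hydration predictions with no physiological change.
steady = [0.70, 0.71, 0.70, 0.72, 0.71]
noisy = [0.70, 0.78, 0.66, 0.75, 0.68]
print("steady jitter:", step_jitter(steady))
print("noisy jitter:", step_jitter(noisy))

# Reported medians: 0.0794 (Yampa) and 0.0455 (Rio Grande).
reduction = relative_reduction(0.0794, 0.0455)
print(f"relative reduction: {reduction:.1%}")
```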

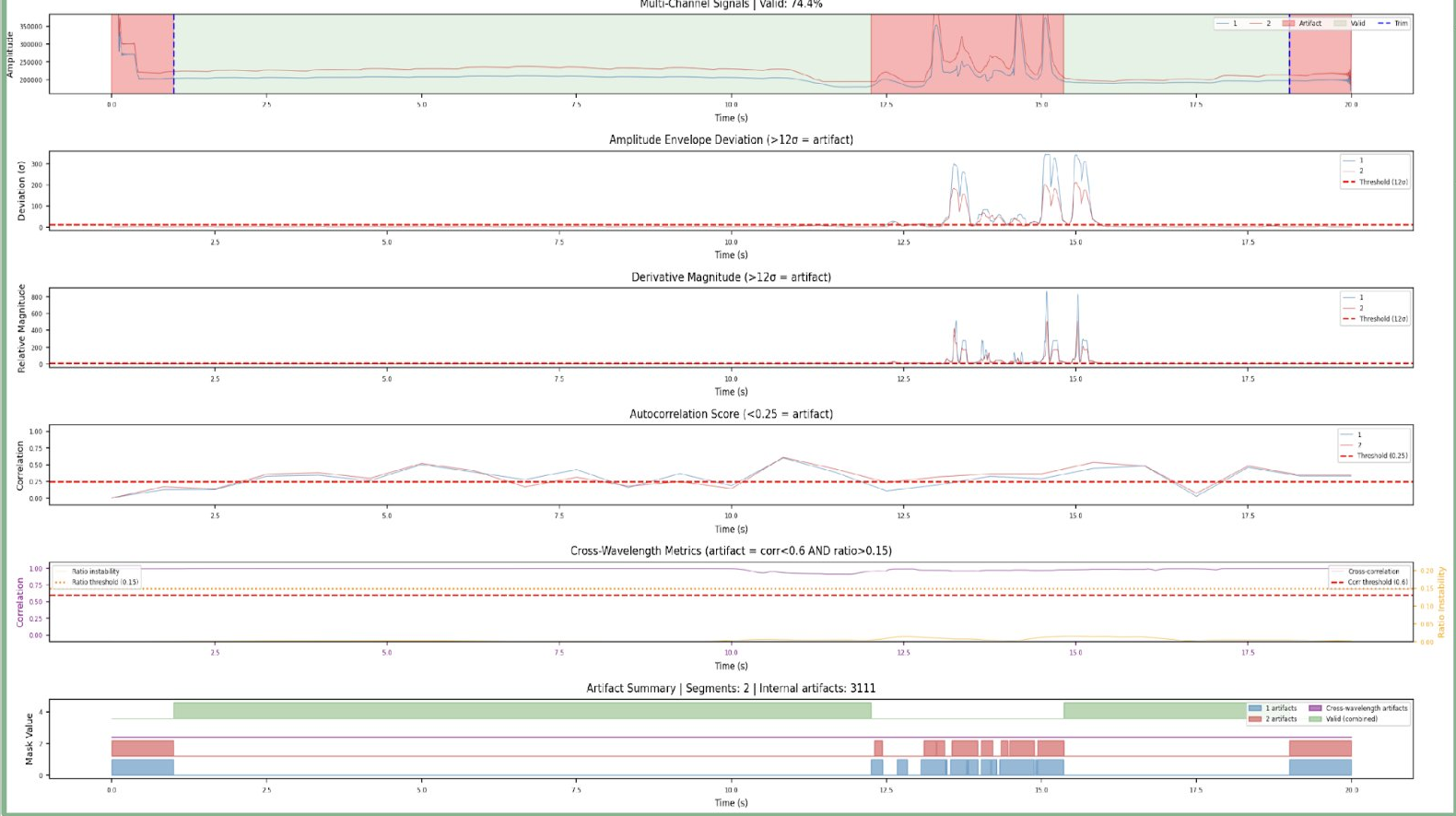

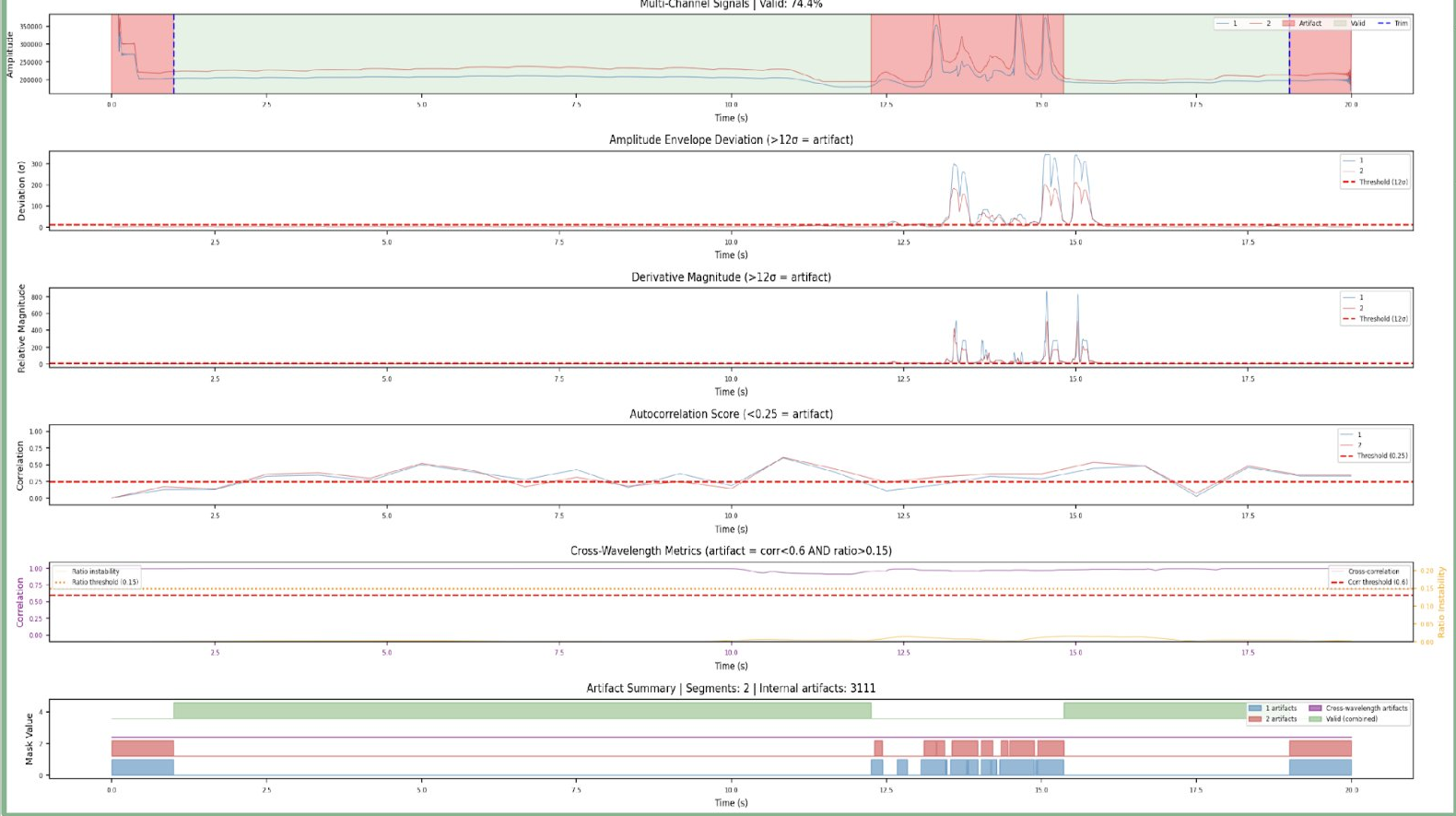

Evidence 3 · Artifact detection you can see

Rio Grande's motion-artifact detection preserves every clean section of PPG signal — while flagging and removing corrupted regions automatically.

A 20-second window of dual-channel PPG recording passed through Rio Grande's artifact-detection pipeline. Four independent detectors (amplitude envelope deviation, derivative magnitude, autocorrelation, and cross-wavelength ratio) each score the signal separately. Their combined output (bottom panel) separates valid regions (74.4% of the window) from artifact regions (initial settling, a motion spike mid-window, and pulse transients at the end). This preserves every clean second rather than discarding whole recordings, which is what lets Rio Grande produce predictions on shorter, real-world captures.
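One simple way to combine several detectors into a valid/artifact mask is sketched below. The combination rule (a sample is valid only if every detector scores it below a threshold), the 0.5 threshold, and the per-sample scores are all assumptions for illustration; the source does not describe how Rio Grande fuses its detectors.

```python
from typing import Dict, List, Sequence, Tuple


def combine_detectors(
    scores: Dict[str, Sequence[float]], threshold: float = 0.5
) -> List[bool]:
    """Mark a sample valid only if every detector's artifact score is below threshold."""
    lengths = {len(s) for s in scores.values()}
    if len(lengths) != 1:
        raise ValueError("all detectors must score the same number of samples")
    n = lengths.pop()
    return [all(s[i] < threshold for s in scores.values()) for i in range(n)]


def valid_segments(valid: Sequence[bool]) -> List[Tuple[int, int]]:
    """Return half-open (start, end) index ranges of contiguous valid samples."""
    segments: List[Tuple[int, int]] = []
    start = None
    for i, ok in enumerate(valid):
        if ok and start is None:
            start = i
        elif not ok and start is not None:
            segments.append((start, i))
            start = None
    if start is not None:
        segments.append((start, len(valid)))
    return segments


# Hypothetical per-sample artifact scores in [0, 1] from the four detectors.
scores = {
    "amplitude_envelope": [0.9, 0.2, 0.1, 0.1, 0.1, 0.1, 0.2, 0.1],
    "derivative_magnitude": [0.8, 0.1, 0.1, 0.1, 0.7, 0.1, 0.1, 0.1],
    "autocorrelation": [0.6, 0.3, 0.2, 0.1, 0.6, 0.2, 0.1, 0.1],
    "cross_wavelength_ratio": [0.4, 0.1, 0.1, 0.2, 0.3, 0.1, 0.1, 0.6],
}

valid = combine_detectors(scores)
segments = valid_segments(valid)
valid_fraction = sum(valid) / len(valid)
print(segments, f"{valid_fraction:.1%} valid")
```

Returning segments rather than a single accept/reject flag is what allows clean stretches of a recording to be kept even when other parts are corrupted.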

Artifact detection ensemble

Rio Grande detects motion artifacts using an ensemble of detectors and removes them, protecting downstream analysis from corruption. This lets us work with shorter recordings in more natural conditions.

Multi-resolution pulse segmentation

Twelve signal-preprocessing strategies run in parallel, and their results are unified downstream. Pulse-segmentation failures on difficult PPG recordings dropped by 85–95%.

Higher frequency cutoff

The new pipeline captures signal information up to 12–15 Hz, versus 8–9 Hz previously. Derivative-based features (vascular compliance) benefit from the additional high-frequency content.

Control-experiment feature discovery

Our control experiments surfaced features that are uniquely hydration-sensitive rather than exercise-sensitive — removing exercise as a key confounder for the first time.

Rio Grande · In the benchmarks

The numbers behind the claims.

In leave-one-subject-out cross-validation across the full 60-subject cohort and 1,510 matched recordings, Rio Grande improves the per-subject Pearson correlation with ground truth on the mean, the median, and the count of strongly correlated subjects. Mean correlation rises from 0.474 to 0.648, median rises from 0.556 to 0.791, and the number of subjects with strong correlation (r > 0.7) jumps from 13/60 to 37/60. Forty-nine of sixty subjects improve outright.

The subjects whose predictions are anti-correlated with ground truth, the hardest tail of the distribution, are the most honest test of the new pipeline. Yampa had three; Rio Grande has five, on recordings where the underlying signal is genuinely borderline. Everywhere else, the gain is unambiguous.

Model Performance Summary

| Metric | Yampa | Rio Grande |
| --- | --- | --- |
| Mean Pearson r | 0.474 | 0.648 |
| Median Pearson r | 0.556 | 0.791 |
| Subjects with r > 0.7 | 13/60 | 37/60 |
| Negative correlations | 3 | 5 |
| Subjects improved | n/a | 49/60 |

Rio Grande · Rejectionless prediction

We stopped throwing away hard pulses. We taught the model to read them.

Yampa's pipeline filtered out PPG pulses that looked atypical — a reasonable first pass, but in production it rejected too many recordings to be useful. Rio Grande replaces that filter with two new components: medoid sampling (the algorithm picks the most representative real pulses from a window rather than averaging them into something physiologically impossible), and probabilistic constraint-based fiducial detection (each candidate critical point on the waveform is scored by a factor graph of physiological constraints, then the best-scoring configuration wins). Together they let Rio Grande produce a prediction for every recording, with a principled confidence score, instead of silently dropping data.

This unlocked 80 new features — diastolic decay time constants, velocity and acceleration ratios, wavelet-based derivative energy, beat-to-beat variability — that Yampa's rejection-based pipeline was masking. The feature set behind v2 is not a refinement of v1; it's a new surface area the prior pipeline couldn't see.
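The medoid sampling idea described above can be sketched as follows: choose the real pulse with the smallest total distance to all the others, instead of a point-wise average that may match no real beat. Euclidean distance, the helper names, and the toy pulses (assumed to be already resampled to a common length) are illustrative assumptions, not details from the source.

```python
import math
from typing import List, Sequence


def euclidean(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two equal-length pulses."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def medoid_index(pulses: Sequence[Sequence[float]]) -> int:
    """Index of the pulse with the smallest total distance to all other pulses."""
    if not pulses:
        raise ValueError("need at least one pulse")
    totals = [sum(euclidean(p, q) for q in pulses) for p in pulses]
    return min(range(len(pulses)), key=totals.__getitem__)


def pointwise_mean(pulses: Sequence[Sequence[float]]) -> List[float]:
    """Sample-by-sample average, shown only for contrast with the medoid."""
    n = len(pulses)
    return [sum(column) / n for column in zip(*pulses)]


# Hypothetical pulses from one window, resampled to five points each.
pulses = [
    [0.0, 0.8, 1.0, 0.6, 0.2],
    [0.0, 0.7, 1.0, 0.5, 0.2],
    [0.0, 0.9, 1.0, 0.6, 0.3],
    [0.0, 0.1, 0.3, 1.0, 0.9],  # atypical beat, e.g. distorted by motion
]

representative = pulses[medoid_index(pulses)]
average = pointwise_mean(pulses)
print("medoid:", representative)
print("mean:  ", average)
```

The medoid is always one of the recorded beats, while the average here is pulled toward the atypical beat and matches none of them.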

Defensibility

7

Patents filed to date.

Covering the signal-processing foundation, fiducial detection approach, and multi-channel measurement framework. The measurement category we're building is ours to lose.